As enterprises move from scripted automation to autonomous systems, one thing becomes clear very quickly: building Agentic AI is only half the challenge. The other half is making sure it behaves reliably in the real world.

That is where Agentic AI testing becomes critical.

Traditional QA methods were designed for predictable software. Agentic systems are different. They reason across context, make decisions dynamically, call tools, respond in natural language, and often operate in real time across voice and text channels. That means testing them requires more than static scripts and predefined flows. It requires a way to evaluate how they perform under real user behavior, real ambiguity, and real business conditions.

For enterprises, this is not just a technical issue. It is a trust issue. If an AI agent misinterprets intent, overloads a user with information, or fails at a critical moment, the result is poor customer experience, lower containment, and slower adoption.

What is Agentic AI testing?

Agentic AI testing is the practice of evaluating autonomous AI systems in realistic environments so teams can verify not only whether an agent works, but how well it makes decisions, handles uncertainty, and supports successful outcomes.

Unlike traditional automation testing, which checks whether a scripted flow passes or fails, Agentic AI testing looks at broader dimensions such as:

- whether the agent interprets goals correctly

- how it handles incomplete or messy input

- whether it recovers gracefully from ambiguity

- how it performs across changing contexts

- whether the experience feels natural, clear, and efficient for the end user

In other words, Agentic AI testing is not just about validating logic. It is about validating behavior.

Why traditional testing falls short

Classic test automation works well when systems follow fixed rules. But Agentic AI does not behave like a rigid decision tree.

In older conversational systems, designers had to build the exact path a user should take. If the user strayed from that path, the experience often failed. In modern agentic systems, the design challenge is different. The goal is no longer to hardcode every route. The goal is to shape the environment, define guardrails, and help the agent succeed in a wide range of situations.

This shift changes the testing model too.

A scripted test might confirm that an agent responds correctly to a specific prompt. But real users do not speak in perfect prompts. They interrupt themselves, change their mind halfway through a sentence, omit key information, and assume the system understands context that was never explicitly stated.

That gap between controlled test cases and real-world behavior is exactly why enterprises need a more realistic approach to Agentic AI testing.

The core challenges in Agentic AI testing

1. Real users are unpredictable

Users rarely communicate in neat, structured steps. They speak in fragments, use vague references, and often revise their request while they are speaking. For voice agents especially, this is the norm, not the exception.

If testing only happens in clean, scripted conditions, teams end up optimizing for the lab instead of the real world.
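One way to move a test suite out of the lab is to derive noisy variants from each clean, scripted utterance. The sketch below is a minimal illustration, not a description of any particular product: the function name `messy_variants`, the filler list, and the three perturbations (dropping a word, adding a filler, restarting mid-sentence) are all assumptions chosen for the example.

```python
import random

# Hypothetical filler words; a real suite would draw on observed transcripts.
FILLERS = ["um", "uh", "so", "actually"]

def messy_variants(utterance: str, n: int = 3, seed: int = 0) -> list[str]:
    """Return n noisy rewrites of a clean, scripted test utterance."""
    rng = random.Random(seed)  # seeded so a failing variant can be replayed
    words = utterance.split()
    variants = []
    for _ in range(n):
        mutated = list(words)
        # Omit one word, as users often leave out key information.
        if len(mutated) > 3:
            del mutated[rng.randrange(len(mutated))]
        # Add a filler word at a random position.
        mutated.insert(rng.randrange(len(mutated) + 1), rng.choice(FILLERS))
        # Half the time, simulate a false start followed by a restart.
        if rng.random() < 0.5:
            mutated = mutated[:2] + ["no", "wait,"] + mutated
        variants.append(" ".join(mutated))
    return variants

for variant in messy_variants("I want to move my flight to Friday"):
    print(variant)
```

Each variant can then be sent to the agent alongside the original utterance, so that a pass on the clean prompt is not mistaken for robustness.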

2. Conversation quality matters as much as task completion

A successful agent does more than produce the right answer. It has to deliver that answer in a way people can follow, trust, and act on.

This is especially important in voice AI. Users cannot skim a spoken answer the way they can scan text on a screen. Information density, timing, pause placement, and wording all influence whether the interaction feels easy or exhausting.
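Information density can be approximated before anyone listens to the audio. The sketch below assumes a speaking rate of 150 words per minute, which is a rough figure for conversational English and not a measured property of any specific voice; the function name `spoken_load` and the choice of metrics are likewise illustrative.

```python
import re

def spoken_load(reply: str, words_per_minute: float = 150.0) -> dict:
    """Rough indicators of how demanding a reply is to listen to."""
    words = reply.split()
    # Split on sentence-ending punctuation and ignore empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", reply) if s.strip()]
    return {
        "words": len(words),
        # Assumed speaking rate; tune it to the actual synthetic voice.
        "estimated_seconds": round(len(words) / words_per_minute * 60, 1),
        "longest_sentence_words": max((len(s.split()) for s in sentences), default=0),
        # Amounts, dates, and codes are hard to retain when heard only once.
        "tokens_with_digits": sum(1 for w in words if any(c.isdigit() for c in w)),
    }

print(spoken_load("Your balance is 1200 dollars. Your next payment is due on May 3."))
```

Numbers like these do not replace listening to the interaction, but they can flag replies that deserve a closer review.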

3. Agent behavior changes with context

Agentic systems adapt. That is one of their biggest strengths, but it also makes testing harder. The same request can lead to different outputs depending on user history, connected systems, prior turns, or current business logic.

That means QA has to move beyond single expected outputs and toward testing ranges of acceptable behavior.
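In practice, a range of acceptable behavior can be written as a set of properties that any good reply must satisfy, instead of one exact string. The following is a minimal sketch under that assumption; the `AcceptableBehavior` class, its fields, and the sample phrases are hypothetical and exist only for this example.

```python
from dataclasses import dataclass, field

@dataclass
class AcceptableBehavior:
    # Each inner list is a group; at least one phrase per group must appear.
    required_any: list[list[str]]
    forbidden: list[str] = field(default_factory=list)
    max_words: int = 40

def violations(reply: str, spec: AcceptableBehavior) -> list[str]:
    """Return the reasons a reply falls outside the acceptable range."""
    text = reply.lower()
    problems = []
    for group in spec.required_any:
        if not any(phrase.lower() in text for phrase in group):
            problems.append(f"missing one of {group}")
    for phrase in spec.forbidden:
        if phrase.lower() in text:
            problems.append(f"contains forbidden phrase {phrase!r}")
    if len(reply.split()) > spec.max_words:
        problems.append(f"longer than {spec.max_words} words")
    return problems

spec = AcceptableBehavior(
    required_any=[["friday"], ["confirm", "booked", "changed"]],
    forbidden=["i cannot help"],
    max_words=30,
)
# Two differently worded replies can both be acceptable.
print(violations("Your flight is now changed to Friday.", spec))
print(violations("Done, I've booked you on the Friday departure.", spec))
print(violations("I cannot help with that.", spec))
```

Simple substring checks are a deliberate simplification here; the same structure works if each property is evaluated by a classifier or a human reviewer.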

4. Failure is often subtle

Not every failure looks like a crash or a clear error. Sometimes the agent technically answers the question, but does it in a confusing way. Sometimes it misses the user’s underlying intent. Sometimes it gives too much detail, too quickly, or uses wording that increases friction.

These softer failures are often the ones that hurt adoption most, yet they are the hardest to catch with conventional testing.

What effective Agentic AI testing should include

To be useful in the enterprise, Agentic AI testing needs to cover more than pass-fail validation. It should help teams improve quality continuously across the full interaction experience.

A strong testing approach should evaluate:

- Realism: Test against the way people actually communicate, not the way teams wish they would communicate.

- Context handling: Measure whether the agent understands references, ambiguity, interruptions, and changing goals.

- Clarity: Assess whether responses are easy to understand in real time, especially in voice experiences.

- Accuracy: Validate that the agent identifies intent correctly and produces the right outcome.

- Recovery: Test how the system responds when information is incomplete, unclear, or contradictory.

- Iteration speed: Enable teams to identify issues and refine behavior quickly, without waiting for late-stage deployment cycles.
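The dimensions above can be tracked as a simple scorecard per test conversation. This is a sketch under stated assumptions: scores are taken to be values between 0 and 1 assigned by a reviewer or an automated grader, the dimension keys are names invented for this example, and iteration speed is left out because it describes the team's process and not an individual conversation.

```python
from statistics import mean

# Hypothetical keys mirroring the per-conversation dimensions listed above.
DIMENSIONS = ("realism", "context_handling", "clarity", "accuracy", "recovery")

def summarize(scorecards: list[dict[str, float]]) -> dict[str, float]:
    """Average each dimension across all reviewed conversations."""
    return {d: round(mean(card[d] for card in scorecards), 2) for d in DIMENSIONS}

def weakest_dimension(summary: dict[str, float]) -> str:
    """Name the dimension with the lowest average score."""
    return min(summary, key=summary.get)

cards = [
    {"realism": 0.8, "context_handling": 0.6, "clarity": 0.9, "accuracy": 1.0, "recovery": 0.4},
    {"realism": 0.6, "context_handling": 0.8, "clarity": 0.7, "accuracy": 1.0, "recovery": 0.6},
]
summary = summarize(cards)
print(summary)
print(weakest_dimension(summary))
```

Reporting the weakest dimension, not a single blended score, keeps a strong accuracy result from hiding a recovery problem.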

Why real-time testing matters

The rise of agentic systems makes real-time testing increasingly important.

When teams can test interactions in real time, they move beyond assumptions and into direct observation. They can hear how the conversation actually sounds, notice where users may get confused, and refine the interaction before problems reach production.

This is especially valuable for voice-based enterprise AI, where the quality of the experience depends on much more than the wording of the response. Latency, speech recognition, pacing, pronunciation, and prosody all influence whether the interaction feels natural and effective.
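Of these factors, latency is the easiest to quantify. The sketch below times each turn of a conversation and reports a nearest-rank percentile, since a slow tail matters more to callers than a good average. The `agent` callable is a stand-in assumption for whatever client a team actually uses; it is not a real API.

```python
import math
import time
from typing import Callable

def measure_turn_latencies(agent: Callable[[str], str], turns: list[str]) -> list[float]:
    """Time each call to the agent, in seconds."""
    latencies = []
    for utterance in turns:
        start = time.perf_counter()
        agent(utterance)
        latencies.append(time.perf_counter() - start)
    return latencies

def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def echo_agent(text: str) -> str:
    # Placeholder agent used only to make the example runnable.
    return text

latencies = measure_turn_latencies(echo_agent, ["hi", "change my flight", "to Friday"])
print(f"p95 latency: {percentile(latencies, 95):.6f} s")
```

For voice, the same pattern can be applied separately to speech recognition, agent response, and speech synthesis to see which stage contributes most to the delay.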

Real-time testing helps teams answer practical questions such as:

- Does this response sound clear when spoken aloud?

- Is the pacing too dense for a caller to follow?

- Does the agent recover naturally when the user changes direction?

- Are there hidden assumptions in the flow that only show up in live interaction?

- Does the conversation feel human and helpful, or mechanical and rigid?

These are not edge concerns. They are central to whether enterprise AI succeeds.

Briefly: how Teneo AI approaches real-time testing

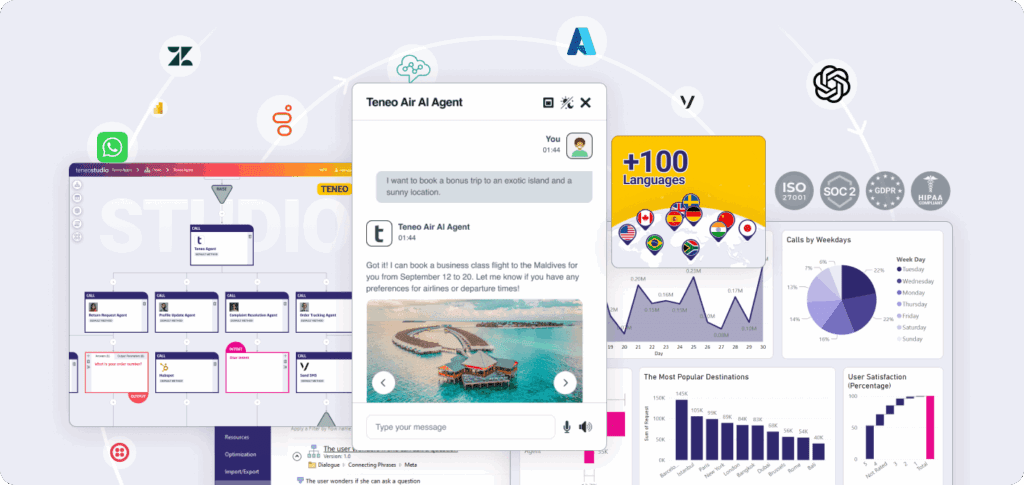

At Teneo, we have introduced Real-Time Testing for Agentic AI to help teams develop and optimize voice and conversational experiences in a native real-time environment.

This matters because many of the hardest issues in enterprise AI only appear when an interaction is experienced live. Real users bring unpredictable expectations. Voice experiences introduce cognitive load that is difficult to judge from text alone. And natural-sounding delivery depends on timing, rhythm, and emphasis, not just content.

With Teneo’s real-time testing capabilities, teams can:

- understand actual speech patterns immediately

- identify assumptions users bring into the conversation

- refine dialogue flows based on real behavior rather than theory

- test how their AI agents feel in live voice interactions

- optimize for naturalness, clarity, and task completion

Combined with Teneo’s broader enterprise capabilities, this creates a faster feedback loop for teams building Agentic AI that has to perform reliably at scale. Teneo can also be integrated with powerful LLMs such as Mistral AI, OpenAI GPT 5.4, and Google Gemini.

The business value of Agentic AI testing

For enterprise teams, better testing is not just about reducing bugs. It affects the full economics of AI delivery.

- Faster iteration: When teams can test and refine behavior in realistic conditions early, they reduce the number of slow, expensive deployment cycles.

- Better customer experience: Testing for clarity, naturalness, and recovery leads to interactions that feel more human and less frustrating.

- Higher accuracy: A more realistic testing process helps surface contextual errors, intent mismatches, and communication issues before launch.

- Lower operational risk: Enterprises can catch failure modes earlier, improving trust and reducing the cost of poor live experiences.

- Stronger ROI: When agents perform better from the start, organizations see faster time to value and lower maintenance overhead. Learn more with our ROI calculator.

A shift in mindset for enterprise teams

The biggest change with Agentic AI testing is not just tooling. It is mindset.

Testing can no longer be treated as a final checkpoint after the build phase. For agentic systems, testing must become part of the design loop itself.

Teams need to evaluate not only whether the system works, but whether it works for real people in real situations. That means testing early, testing continuously, and testing in ways that reflect how users actually behave.

The organizations that do this well will not just ship faster. They will build AI experiences that users trust.

Final thoughts

As agentic systems become more autonomous, the quality bar rises. Enterprises need more than scripted validation. They need a way to test behavior, judgment, clarity, and real-world performance.

That is why Agentic AI testing is becoming a foundational capability for modern AI teams.

The future of enterprise AI will belong to organizations that can move quickly without sacrificing reliability. Real-time testing is a key part of making that possible.

And as more enterprises bring voice and conversational agents into customer-facing workflows, the ability to test in realistic, live conditions will increasingly separate successful deployments from failed pilots.