Test your bot with Cyara Botium

While auto-testing in Teneo allows you to perform quality assurance tasks during the development and maintenance of a Teneo solution, you may wish to perform end-to-end tests before you release your bot to ensure the bot behaves as intended, the answers provided are as expected and the integrations work as anticipated.

Botium is a quality assurance framework developed specifically for regression testing of bots, including Teneo bots. Botium is a third-party product developed by Cyara.

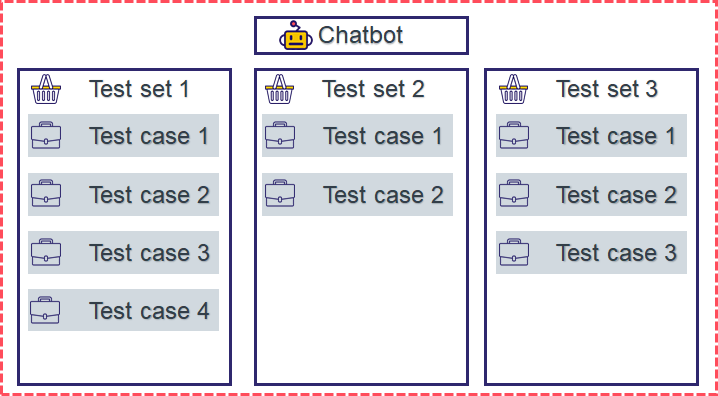

Test projects in Botium

A Botium test project typically contains the following components:

- A Bot which in our case is the Teneo engine that you will perform the tests against

- One or more Test Sets, which define properties used during testing and which contain...

- One or more Test Cases, multi-step dialogues that the bot is supposed to follow

This page guides you through setting up a Botium project and running tests against a Teneo bot.

Prerequisites

Sign up to Botium

You will need access to a Botium server from Cyara.

Published Teneo solution

You need to know the URL of the published Teneo solution that you want to test. If you haven't published your solution yet or don't know the URL of your bot, see Publish your bot to learn more.

Setup instructions

Make sure you've logged in to your Botium instance through your favorite browser before you follow the setup instructions.

Register a bot

First, we are going to connect a Teneo chatbot to the Botium instance.

- Go to 'Chatbots' in the menu to the left

- Then click on 'Register New Chatbot'

- Give the chatbot a name

- Choose the 'Botium connector for Teneo' from the 'Connector/Chatbot Technology' dropdown menu

- Add the published endpoint URL to the field 'Teneo chatbot endpoint URL'

Create a new test set

Now we are going to set up a test set in which the test cases are going to be stored.

- Go to 'Test Sets' in the menu to the left

- Click 'Start Test Set From Scratch'

- Give the test set a name and an optional description

- Click 'Continue To Test Case Designer' to save the test set

Add a test case

To create a test case, we're going to use Botium's live chat functionality, which lets you have a dialog with your bot directly from within Botium and save that dialog as a test case.

- Inside the test set, click on the 'Record Live Chat' button

- Choose the bot that you want to record the live chat with (note that 'Echo Bot' and 'I am Botium' are default bots in Botium)

- Botium will automatically connect to the bot and once connected, Botium will notify you through a pop up at the bottom of your screen

- Now, have a dialog with your bot

- When you are happy with your dialog, click on 'Save Test Case'

- Give the test case a name and click 'OK' to save the test case

- Once saved, a notification will pop up at the bottom of the screen telling you that the test case has been saved

- Click 'Cancel' to return to the test set

The record live chat functionality is available from multiple views in Botium:

- Your bot configuration view

- Test set view

- Test project view

Set up a Test Project

Let's set up a test project that ties your bot, test set, and test case together. We're going to use the 'Quickstart' wizard, which lets us select the bot we want to test against and the test set(s) we want to bind to the test project.

Create a test project and select your Teneo bot

- Click on 'Quickstart' in the menu to the left

- Give the test project a name

- Click on 'Connect to bot or enter new connection settings' and choose the bot that you connected to Botium before

- Click 'NEXT'

Select test set(s)

In the second step, you'll assign the test set(s) that you want to bind to the test project.

- Untick the 'Connect to Chatbot or enter new connection settings' option

- Go to the search field 'Select from registered Test Set(s)' and search for the test set that you created before and assign it

- Click 'NEXT'

Select test environments

In the last step, we'll leave everything at default, and click 'Save'.

You've now set up a test project that contains:

- A Teneo chatbot that we can interact with and test

- A test set in which we can store our test cases

- A test case which is the dialog path that the chatbot is supposed to follow

Note that the test project is a static entity: you cannot add additional test sets after a test project has been set up.

Run your first test session

You can start testing your chatbot directly from the test project by clicking on the 'Start Test Session Now' button. Once you’ve started the test session, Botium will present the test results. Here you can see which test cases failed and which passed. You can also expand the test cases to inspect the dialogs.

If you'd like to run additional tests, navigate back to your test project and click 'Start Test Session Now' again.

Interpret failed tests

Botium provides plenty of information when a test case fails:

- The user input that was sent to the chatbot

- The chatbot's response

- An error message displaying which asserter failed and on what line the test case failed

You can get even more information per transaction by clicking the <> symbol next to the user inputs and the chatbot's responses. This opens the Botium code, which shows in detail what was sent to and received from your chatbot.

Advanced Options

In addition to testing whether the bot gave the correct answer for a particular input, it's important to test all parts of a chatbot to make sure they are functioning as expected. This includes extra parameters that may be sent to the bot and additional details that are included in the bot's response.

We will use Botium's 'Source editor' to explain some of the advanced testing options in Botium. You can modify the source code of a test case by opening the test case and clicking 'Open in source editor' at the bottom of the test scenario.

The source code of a test case typically looks like this:

User: Hello!

Bot: Hello. It's good to see you!

User: What time is it?

Bot: My watch says it's time to get some exercise!

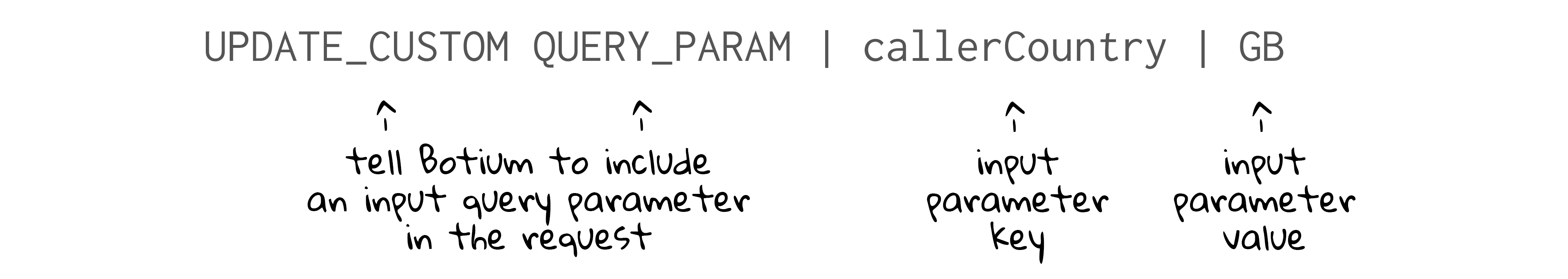

Send input parameters

To correctly test the behavior of our chatbots, we may have to send input parameters. We add the input parameters below the user inputs (#me) like this:

User: Hello!

UPDATE_CUSTOM QUERY_PARAM | callerCountry | GB

Bot: Hello. It's good to see you!

Let's inspect the part that is sending the input parameter:

The UPDATE_CUSTOM QUERY_PARAM function tells Botium to send an input parameter. It is followed by the parameter's key and value, separated by pipes. If you want to send multiple input parameters with the same user input, add a new line for each input parameter, using the same syntax.
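If you generate test cases from a script, the one-line-per-parameter rule can be sketched as follows. This is a minimal Python illustration and not part of Botium: the helper name `render_user_step` and the second parameter `channel` are hypothetical, and the output simply follows the layout of the example above.

```python
from typing import Mapping


def render_user_step(utterance: str, params: Mapping[str, str]) -> str:
    """Render one user step followed by one UPDATE_CUSTOM line per input parameter."""
    lines = [f"User: {utterance}"]
    for key, value in params.items():
        # Each input parameter gets its own line: function, key, and value separated by pipes.
        lines.append(f"UPDATE_CUSTOM QUERY_PARAM | {key} | {value}")
    return "\n".join(lines)


# 'channel' is a made-up parameter used only to show the multi-parameter case.
step = render_user_step("Hello!", {"callerCountry": "GB", "channel": "web"})
print(step)
```

The printed step contains the user input followed by two UPDATE_CUSTOM lines, one per parameter.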

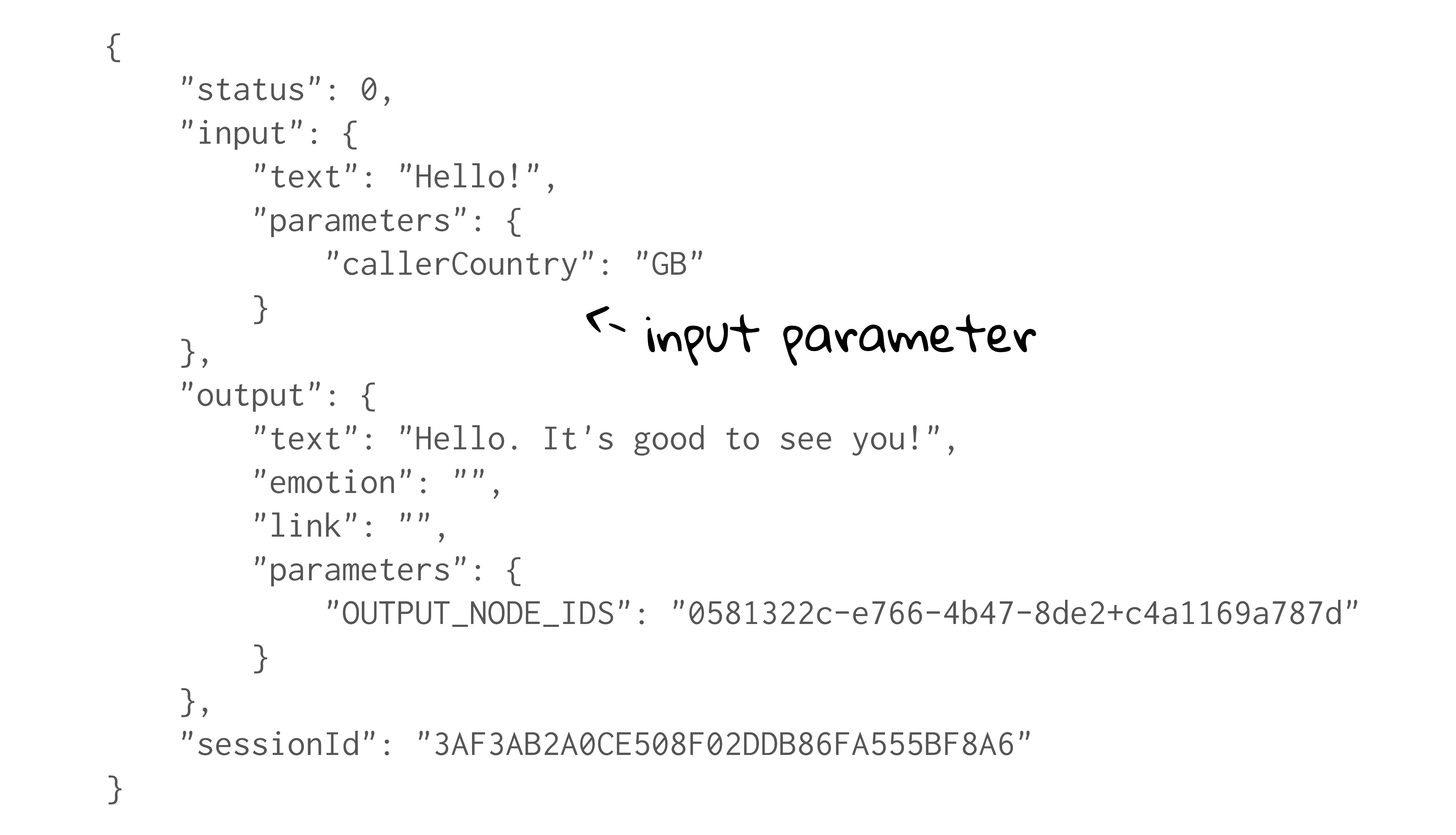

Below is an image that illustrates where input parameters, that were included in requests, appear in the engine response JSON:

Test output parameters

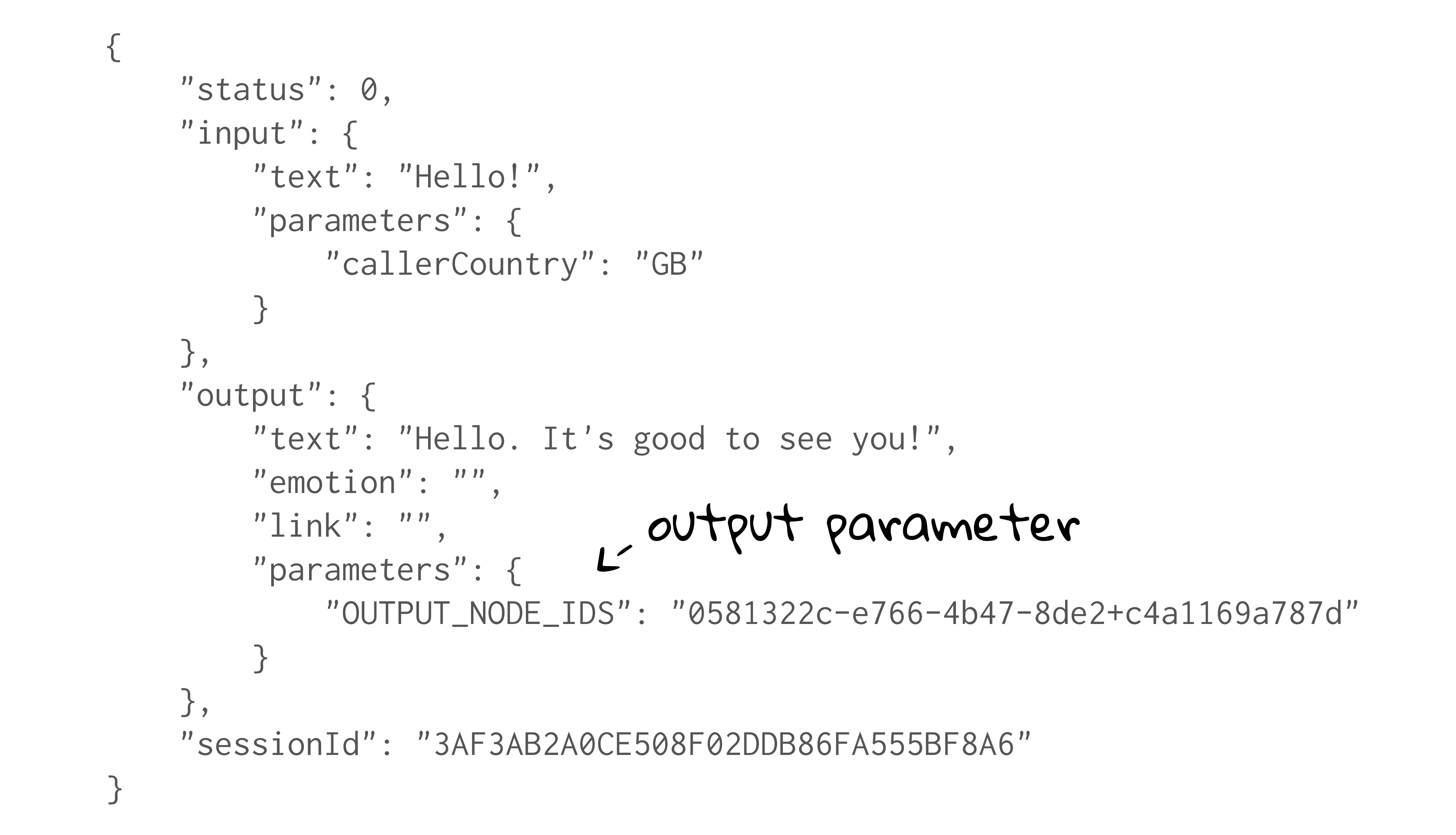

Sometimes you might want to evaluate parameters that are included in the engine response JSON, like output parameters:

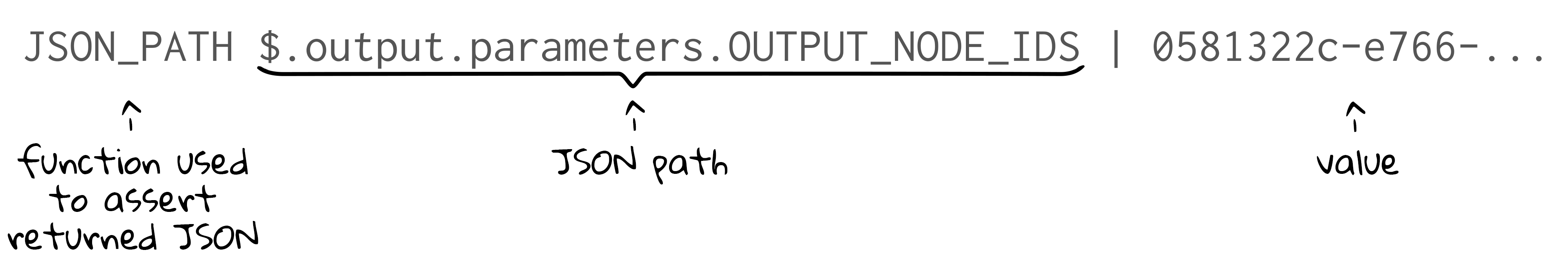

To test parameters included in the JSON response from Teneo, Botium offers a JSON path asserter. An example scenario that asserts the value of an output parameter called OUTPUT_NODE_IDS may look as follows:

User: Hello!

Bot: Hello. It's good to see you!

JSON_PATH $.output.parameters.OUTPUT_NODE_IDS | 0581322c-e766-4b47-8de2-c4a1169a787d

Let's inspect the part that checks the JSON path:

To evaluate the returned JSON, you use the JSON_PATH function along with:

- The path to the parameter you want to test

- The value that you want to assert

Note that in the example test scenario above, Botium will assert both the bot's text response (Hello. It's good to see you!) and an output parameter called OUTPUT_NODE_IDS. If you'd prefer to just test a JSON path, you can omit the expected text response. If you want to test multiple JSON paths in the same engine response, you should create a new line for each path, using the same syntax.
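To make the idea of a JSON path assertion concrete, here is a minimal Python sketch of what such a check does conceptually. It is not Botium's implementation: the helpers `resolve_path` and `assert_json_path` are hypothetical, only plain dotted paths are supported, and the response below is an invented, trimmed-down stand-in for an engine response with a placeholder node id.

```python
from typing import Any


def resolve_path(document: Any, path: str) -> Any:
    """Resolve a simple dotted path such as '$.output.parameters.X'.

    Only '$' followed by dot-separated keys is supported; this is not a full JSONPath engine.
    """
    if not path.startswith("$"):
        raise ValueError("path must start with '$'")
    current = document
    for key in path[1:].split("."):
        if not key:
            continue  # skip the empty segment right after '$'
        current = current[key]
    return current


def assert_json_path(document: Any, path: str, expected: str) -> bool:
    """Return True when the value at `path` equals `expected` (compared as strings)."""
    try:
        return str(resolve_path(document, path)) == expected
    except (KeyError, TypeError):
        return False  # the path does not exist in this response


# Hypothetical engine response, reduced to the fields used in this sketch.
response = {
    "output": {
        "text": "Hello. It's good to see you!",
        "parameters": {"OUTPUT_NODE_IDS": "example-node-id"},
    }
}

ok = assert_json_path(response, "$.output.parameters.OUTPUT_NODE_IDS", "example-node-id")
missing = assert_json_path(response, "$.output.parameters.UNKNOWN", "x")
print(ok, missing)
```

A missing path is treated as a failed assertion rather than an error, which mirrors how a test case would simply fail when the parameter is absent.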

Test links

It's important to make sure that the links that your chatbot is returning are working as intended. To do this, you can use the link checker that's available in Botium.

User: What drinks do you serve?

Bot: We serve everything from flat whites to espressos. Please visit https://teneo.ai to see the full menu.

CHECKLINK 200

The CHECKLINK function validates URLs found in the output text as well as in the output's URL field. When testing a URL, Botium asserts that the HTTP response code it receives matches the value specified in the test scenario.
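The following Python sketch illustrates the kind of check a link checker performs. It is an illustration under stated assumptions, not Botium's code: the names `fetch_status` and `check_links` are hypothetical, only URLs in the answer text are covered (not a separate URL field), and the usage line swaps in a stub so that no network request is made.

```python
import re
import urllib.error
import urllib.request
from typing import Callable, Dict

URL_PATTERN = re.compile(r"https?://[^\s<>]+")


def fetch_status(url: str) -> int:
    """Return the HTTP status code for `url` (performs a real network request)."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx/5xx responses still carry a status code


def check_links(text: str, expected: int,
                fetch: Callable[[str], int] = fetch_status) -> Dict[str, bool]:
    """Map every URL found in `text` to whether its status code equals `expected`."""
    results = {}
    for match in URL_PATTERN.findall(text):
        url = match.rstrip(".,;:!?")  # drop sentence punctuation glued to the URL
        results[url] = fetch(url) == expected
    return results


answer = "Please visit https://teneo.ai to see the full menu."
# A stub stands in for the network so the example is deterministic.
print(check_links(answer, 200, fetch=lambda url: 200))
```

Passing the fetch function as a parameter keeps the URL extraction and comparison logic testable without depending on a live site.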

Other useful Botium features

Botium offers many more useful features for testing your bot and we encourage you to browse and explore Botium's own documentation to make the most of Botium.

Here is a list of some of the useful features that might be beneficial when you are composing tests for your Teneo chatbot:

- Text matching mode: as output nodes can contain dynamic parts, you can change the text matching mode, for example to allow the use of wildcards in test scenarios

- Partial conversations: create a subset of a conversation that you can reuse in your test cases

- Scripting memory: store a part of the bot's response in a scripting memory variable and reuse it later in the same test case
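As a rough illustration of why wildcards help with dynamic answers, the Python sketch below matches an expected text containing `*` against an actual bot response. The helper `wildcard_match` is hypothetical, and the assumption that `*` matches any run of characters is ours; Botium's own matching modes may behave differently, so check its documentation for the exact semantics.

```python
import re


def wildcard_match(expected: str, actual: str) -> bool:
    """Return True when `actual` matches `expected`, where '*' matches any run of characters."""
    # Escape every literal part, then join the parts with a regex wildcard.
    pattern = ".*".join(re.escape(part) for part in expected.split("*"))
    return re.fullmatch(pattern, actual, flags=re.DOTALL) is not None


print(wildcard_match("My watch says it's *", "My watch says it's time to get some exercise!"))
print(wildcard_match("Hello, *!", "Goodbye."))
```

The first call matches because only the fixed prefix is asserted; the second fails because the fixed parts of the expected text do not appear in the response.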