iOS Chat

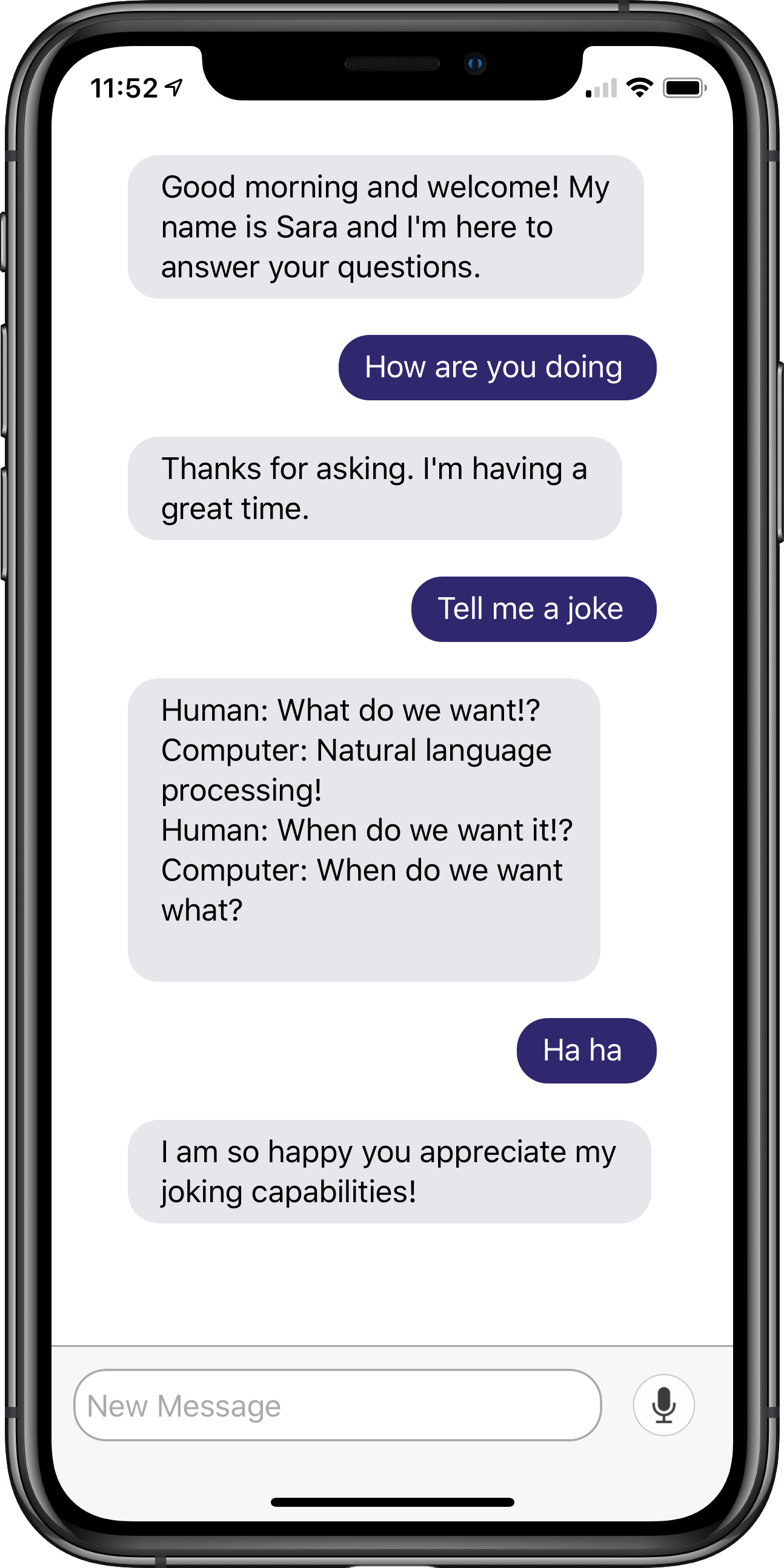

The Teneo iOS Chat app is an example iOS chat app for Teneo. It uses the Teneo iOS SDK and supports Apple's Speech Recognition as well as Text to Speech. Conversations are displayed in a user-friendly chat interface.

The project demonstrates the following concepts:

- Text input using the native iOS Speech Recognizer as well as manual text entry.

- Spoken responses using the native iOS Text to Speech (TTS) capability.

- A chat UI, based on MessageKit.

- Usage of the Teneo iOS SDK to interact with the Teneo engine.

You can find the source code of this project on GitHub.

Prerequisites

- You need to know the Teneo engine URL of your published bot.

- When running the app for the first time, grant microphone and Speech Recognition access to enable input by voice.

Installation

- Clone the repository from GitHub.

- Install the TIE dependency by running the following command in the project root folder:

pod install

- Open the project in Xcode by opening TieChatDemo.xcworkspace.

- Point the app at your bot's Teneo engine by setting the following variables in ViewController.swift:

- baseUrl, the base URL of your engine, for example https://myteam-4fe77f.bots.teneo.ai

- solutionEndpoint, the path or endpoint of your engine, like /longberry_baristas_0x383bjp5a8e6tscbjd9x03tvb/. Note: make sure it ends with a slash (/).
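As a rough illustration of how the two settings fit together, the sketch below joins a base URL and a solution endpoint into a single engine URL and adds the trailing slash if it is missing. The function makeEngineURL is hypothetical and not part of the project or the Teneo SDK; the project itself only asks you to set the two variables.

```swift
import Foundation

// Hypothetical helper (not part of the project): joins the two settings
// into one engine URL and normalizes the slashes between and after them.
func makeEngineURL(baseUrl: String, solutionEndpoint: String) -> URL? {
    // Drop trailing slashes from the base so the join never produces "//".
    var base = baseUrl
    while base.hasSuffix("/") { base.removeLast() }

    // Make sure the endpoint starts and ends with a slash.
    var path = solutionEndpoint
    if !path.hasPrefix("/") { path = "/" + path }
    if !path.hasSuffix("/") { path += "/" }

    return URL(string: base + path)
}

let engineURL = makeEngineURL(
    baseUrl: "https://myteam-4fe77f.bots.teneo.ai",
    solutionEndpoint: "/longberry_baristas_0x383bjp5a8e6tscbjd9x03tvb"
)
```

A check like this can catch a missing trailing slash before the first request is sent.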

Project details

TIE SDK connectivity

This project follows the TIE SDK connectivity guidelines, which are fully detailed in the tie-api-client-ios SDK. The SDK dependency declared in the Podfile enables the app to use the TIE SDK and communicate with Teneo Engine.

The viewDidLoad method of the app initializes, among other things, the TieApiService and UI elements.

User input (text) is sent to Teneo Engine with the helper method sendToTIE(String text, HashMap<String, String> params).

Additionally, user input received from the Speech Recognizer or the keyboard is posted to the Chat UI and sent to Teneo Engine with the helper method consumeUserInput(userInput: String).
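The flow described above (post the input to the chat, then forward it to the engine) could be sketched roughly as follows. This is not the project's code: the protocols ChatDisplaying and EngineSending and the class InputRouter are hypothetical stand-ins for the Chat UI and the TIE SDK client, and trimming and skipping empty input is an assumption made for the example.

```swift
import Foundation

// Hypothetical stand-in for the Chat UI.
protocol ChatDisplaying {
    func post(userMessage: String)
}

// Hypothetical stand-in for the engine client.
protocol EngineSending {
    func send(text: String, parameters: [String: String])
}

final class InputRouter {
    private let chat: ChatDisplaying
    private let engine: EngineSending

    init(chat: ChatDisplaying, engine: EngineSending) {
        self.chat = chat
        self.engine = engine
    }

    // Mirrors the role of consumeUserInput(userInput:) described above:
    // show the input as a chat bubble, then send it to the engine.
    func consumeUserInput(userInput: String) {
        // Assumption: whitespace-only input is ignored.
        let text = userInput.trimmingCharacters(in: .whitespacesAndNewlines)
        guard !text.isEmpty else { return }

        chat.post(userMessage: text)
        engine.send(text: text, parameters: [:])
    }
}
```

Routing both keyboard and speech input through one method keeps the chat transcript and the engine requests in step, whichever input channel the user chose.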

Speech Recognition

This project implements Apple's native iOS Speech Recognizer (ASR) with SFSpeechRecognizer. Once helper methods have validated app permissions and other conditions, the startAudioEngineAndNativeASR method performs two main tasks:

- Initialize an SFSpeechRecognizer for a specific language ('en-GB' by default).

- Initialize an audio engine, and feed streaming audio data into an ASR Request for processing.

Tapping the microphone button silences any Text to Speech playback before launching the Speech Recognizer. Transcription results are received in the didFinishRecognition delegate method, posted as message bubbles in the Chat UI, and finally sent to the Teneo engine for processing.
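A minimal sketch of the two tasks and the delegate callback, using Apple's Speech and AVFoundation frameworks, might look like the following. The class NativeSpeechInput and its onTranscription closure are hypothetical names chosen for the example, not the project's implementation. Permission checks and AVAudioSession configuration are left out for brevity, and the buffer size of 1024 is an arbitrary choice.

```swift
import AVFoundation
import Speech

// Illustrative sketch; only the Apple framework types are real API.
final class NativeSpeechInput: NSObject, SFSpeechRecognitionTaskDelegate {
    let localeIdentifier: String
    private let audioEngine = AVAudioEngine()
    private var request: SFSpeechAudioBufferRecognitionRequest?
    private var task: SFSpeechRecognitionTask?

    // Hypothetical hook: called with the final transcription.
    var onTranscription: ((String) -> Void)?

    init(localeIdentifier: String = "en-GB") {
        self.localeIdentifier = localeIdentifier
        super.init()
    }

    func start() throws {
        // Task 1: create a recognizer for the chosen language.
        let locale = Locale(identifier: localeIdentifier)
        guard let recognizer = SFSpeechRecognizer(locale: locale),
              recognizer.isAvailable else { return }

        // Task 2: stream microphone buffers into a recognition request.
        let request = SFSpeechAudioBufferRecognitionRequest()
        self.request = request
        let input = audioEngine.inputNode
        let format = input.outputFormat(forBus: 0)
        input.installTap(onBus: 0, bufferSize: 1024, format: format) { buffer, _ in
            request.append(buffer)
        }
        audioEngine.prepare()
        try audioEngine.start()
        task = recognizer.recognitionTask(with: request, delegate: self)
    }

    func stop() {
        audioEngine.stop()
        audioEngine.inputNode.removeTap(onBus: 0)
        request?.endAudio()
    }

    // Delegate callback that delivers the final recognition result.
    func speechRecognitionTask(_ task: SFSpeechRecognitionTask,
                               didFinishRecognition recognitionResult: SFSpeechRecognitionResult) {
        onTranscription?(recognitionResult.bestTranscription.formattedString)
    }
}
```

In an app, the onTranscription closure would be the place to post the text to the chat and forward it to the engine.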

Text to Speech

Text to Speech (TTS) is implemented with Apple's native iOS AVSpeechSynthesizer. The AVSpeechSynthesizer object is the center of voice synthesis in the project, and it is initialized, launched, and released throughout the app lifecycle. In this project, the method speakIOS12TTS(utterance: String) speaks the bot responses received from the Teneo engine out loud.
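For orientation, speaking and silencing a response with AVSpeechSynthesizer can be sketched as below. The class BotVoice and its method names are hypothetical and do not reproduce the project's speakIOS12TTS method; the 'en-GB' voice is an assumption chosen to match the recognizer's default language.

```swift
import AVFoundation

// Illustrative wrapper; only the AVFoundation types are real API.
final class BotVoice {
    // Keep one synthesizer alive for as long as speech may be playing.
    private let synthesizer = AVSpeechSynthesizer()

    var isSpeaking: Bool { synthesizer.isSpeaking }

    // Speaks a bot response out loud in the given language.
    func speak(_ text: String, languageCode: String = "en-GB") {
        let utterance = AVSpeechUtterance(string: text)
        utterance.voice = AVSpeechSynthesisVoice(language: languageCode)
        synthesizer.speak(utterance)
    }

    // Stops playback at once, for example before the microphone starts listening.
    func silence() {
        if synthesizer.isSpeaking {
            synthesizer.stopSpeaking(at: .immediate)
        }
    }
}
```

Calling silence() before starting speech recognition prevents the recognizer from picking up the app's own voice output.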

Chat UI

The Chat UI and input bar are based on the MessageKit framework, but implemented as a self-contained class inside this project. You can customize the message bubble color, avatar, and sender in that class.